What to Fix Before Adding AI to Your Maintenance Workflows

Most facilities ask what to do before implementing AI maintenance only after they have deployed predictive tools onto unstable foundations and received noisy, unreliable outputs in return. Only 12% of organizations have data of sufficient quality and accessibility for AI, and 62% cite data governance as their top AI challenge. In maintenance, those problems are fixable before AI enters the picture.

With that in mind, this article covers what to do before implementing AI maintenance, including data preparation, preventive maintenance compliance, and how a fully integrated platform such as LLumin CMMS+ can make that process easier.

AI Only Works If Your Maintenance Foundation Is Strong

In maintenance, AI readiness depends on structured, consistent data generated by disciplined, daily execution. Predictive tools learn from historical patterns, and if those patterns are incomplete, inconsistently recorded, or fragmented across disconnected systems, the AI cannot produce useful insights.

AI Readiness Gaps Across Industries:

| Readiness Factor | Statistic |
|---|---|
| Data quality and accessibility | 46% unprepared |
| Employee skills and training | 60% unprepared |
| Data governance | 62% cite as top AI challenge |
| Maintenance process efficiency | 93% rate processes as not very efficient |
| CMMS implementation success | 20-40% succeed |

The data reflects this: 93% of companies consider their maintenance processes not very efficient, and roughly 60-80% of CMMS implementations fail due to poor engagement, unclear goals, and lack of management support. However advanced the AI system is, it will not perform to standard while these obstacles remain.

6 Things to Do Before Implementing AI-Driven Maintenance

Preparing maintenance data for AI means addressing the six most common foundational weaknesses before predictive tools enter your workflows.

AI Readiness Checklist Overview:

| Fix | What’s at Stake | Estimated ROI |
|---|---|---|
| Clean up work order records | Incomplete history = poor predictions | 15-25% downtime reduction |
| Standardize asset hierarchy | Models can’t find patterns in messy data | 20-30% improvement in predictive accuracy |
| Improve PM compliance | Erratic baselines = erratic alerts | 30-40% fewer false alarms |
| Reduce reactive culture | Chaotic data can’t train reliable models | 15-25% reduction in emergency labor costs |
| Eliminate disconnected systems | Fragmented data weakens every output | 20-30% faster decision-making |
| Address workflow consistency | Inconsistent inputs = unreliable outputs | 10-15% reduction in rework |

1) Clean Up Incomplete Work Order Records

AI in maintenance relies on historical patterns. When work order records carry missing failure codes, vague close-out notes, or blank cause fields, predictive models train on gaps rather than reality. The output is either generic alerts based on incomplete failure libraries or confident predictions built on incorrect patterns.

Preparing maintenance data for AI starts with enforcing maintenance data integrity on every work order before closing, including failure codes, root causes, actions taken, and parts used.

Work Order Data Quality Requirements:

| Field | Common Gap | How to Fix |
|---|---|---|
| Failure code | Often left blank or vague | Make the field mandatory at work order closure; provide a dropdown menu with specific, pre-defined codes |
| Root cause | Skipped under time pressure | Require root cause entry before the work order can be closed; tie completion metrics to quality, not just speed |
| Parts used | Not linked to work order | Require parts entry before closure; auto-populate from storeroom withdrawals where possible |
| Close-out notes | “Fixed” with no detail | Enforce a minimum 25-character count; provide guided prompts (“What failed? What was replaced?”) |
| Asset ID | Entered inconsistently | Implement QR code/barcode scanning on all assets; standardize naming conventions and restrict changes to admins |
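The closure rules in the table above can be expressed as a simple validation gate. The following is a minimal Python sketch, not a description of any particular CMMS: the `WorkOrder` fields, the `APPROVED_FAILURE_CODES` list, and the `closure_problems` function are all hypothetical names chosen for illustration. Only the 25-character minimum comes from the table.

```python
from dataclasses import dataclass, field

# Hypothetical dropdown values; a real list would come from your own failure library.
APPROVED_FAILURE_CODES = {"BEARING_WEAR", "SEAL_LEAK", "MOTOR_OVERHEAT", "BELT_SLIP"}
MIN_NOTE_LENGTH = 25  # minimum close-out note length from the table above


@dataclass
class WorkOrder:
    asset_id: str
    failure_code: str = ""
    root_cause: str = ""
    # Assumption: jobs that consumed no parts record an explicit "NONE" entry.
    parts_used: list[str] = field(default_factory=list)
    close_out_notes: str = ""


def closure_problems(wo: WorkOrder) -> list[str]:
    """Return the reasons a work order cannot be closed (empty list means OK)."""
    problems = []
    if not wo.asset_id.strip():
        problems.append("asset ID is missing")
    if wo.failure_code not in APPROVED_FAILURE_CODES:
        problems.append("failure code is blank or not an approved code")
    if not wo.root_cause.strip():
        problems.append("root cause is missing")
    if not wo.parts_used:
        problems.append("no parts entry recorded")
    if len(wo.close_out_notes.strip()) < MIN_NOTE_LENGTH:
        problems.append(f"close-out notes are shorter than {MIN_NOTE_LENGTH} characters")
    return problems


# A typical low-quality record: only the asset ID and a one-word note.
vague = WorkOrder(asset_id="PUMP-001", close_out_notes="Fixed")

# A record that satisfies every rule.
complete = WorkOrder(
    asset_id="PUMP-001",
    failure_code="BEARING_WEAR",
    root_cause="Lubrication interval exceeded",
    parts_used=["BRG-6205"],
    close_out_notes="Replaced drive-end bearing and re-greased housing.",
)

for wo in (vague, complete):
    print(wo.asset_id, closure_problems(wo) or "ready to close")
```

Returning a list of problems rather than a single pass/fail flag lets the technician see every missing field at once instead of fixing them one rejection at a time.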

2) Standardize Your Asset Hierarchy Structure

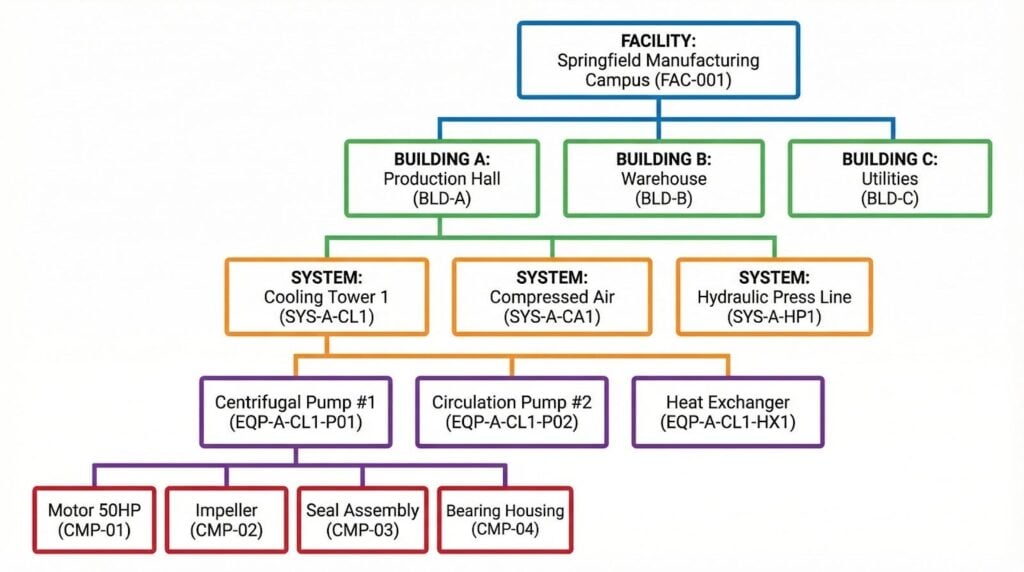

If the same pump appears under three different names across three systems, AI cannot detect patterns across its failure history. A clear asset hierarchy structure with consistent parent-child relationships from facility to system to component gives predictive models the anchor they need to identify meaningful trends.

Asset Hierarchy Structure Example
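As a rough illustration of the facility-to-system-to-component idea, here is a minimal Python sketch of a parent-child asset tree. The `AssetNode` class, the asset IDs, and the alias table are hypothetical and are not drawn from any specific product. The point is that every record resolves to one canonical ID with one unambiguous path.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AssetNode:
    asset_id: str   # single canonical ID (hypothetical naming scheme)
    name: str
    level: str      # "facility", "system", or "component"
    # Excluded from repr/compare so parent-child links cannot recurse.
    parent: Optional["AssetNode"] = field(default=None, repr=False, compare=False)
    children: list["AssetNode"] = field(default_factory=list)

    def add_child(self, child: "AssetNode") -> "AssetNode":
        child.parent = self
        self.children.append(child)
        return child

    def path(self) -> str:
        """Full path from the facility down to this asset."""
        node, parts = self, []
        while node is not None:
            parts.append(node.asset_id)
            node = node.parent
        return "/".join(reversed(parts))


# Hypothetical alias table: three names for the same pump collapse to one ID.
ALIASES = {"Pump 1": "PUMP-001", "P-001": "PUMP-001", "CW pump #1": "PUMP-001"}


def canonical_id(raw_name: str) -> str:
    """Map a legacy name to its canonical asset ID, or fail loudly."""
    try:
        return ALIASES[raw_name]
    except KeyError:
        raise ValueError(f"unknown asset name: {raw_name!r}") from None


plant = AssetNode("PLANT-A", "Plant A", "facility")
cooling = plant.add_child(AssetNode("COOLING", "Cooling water system", "system"))
pump = cooling.add_child(AssetNode("PUMP-001", "Circulation pump 1", "component"))

print(pump.path())  # PLANT-A/COOLING/PUMP-001
print({canonical_id(n) for n in ALIASES})
```

Failing on an unknown name, rather than silently creating a new asset, is one way to keep duplicate records from re-entering the registry.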

LLumin’s ReadyAsset provides a structured asset registry that captures equipment relationships, lifecycle data, and maintenance history in a single record. This record enables reliable pattern recognition across an entire fleet rather than isolated individual assets.

3) Improve Preventive Maintenance Compliance

Poor preventive maintenance compliance creates two problems for AI:

- It produces erratic execution data that doesn’t represent consistent asset behavior.

- It leaves gaps in failure history that make baseline calibration unreliable.

PM compliance should be 90% or higher before predictive logic builds on top of it, yet 59% of facilities dedicate less than half their total maintenance time to planned work. Maintenance process standardization before AI means consistent PM execution tracked through work order automation rather than paper records or informal completion tracking.

PM Compliance Benchmarks

| Metric | Industry Average | AI-Ready Target |
|---|---|---|
| PM compliance rate | <60% (typical) | 90%+ |
| Planned Maintenance % (PMP) | Below 85% for most | 85%+ |
| Time dedicated to planned work | <40% for 59% of facilities | >60% |
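To make the 90% target measurable, the sketch below computes a PM compliance rate in Python. It assumes one common definition, on-time completions divided by scheduled PMs, which the benchmarks above do not spell out; the `PMTask` record, the `grace_days` tolerance, and the sample data are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

AI_READY_TARGET = 90.0  # percent, from the benchmark table above


@dataclass
class PMTask:
    asset_id: str
    due: date
    completed: Optional[date] = None  # None means the PM was never completed


def pm_compliance(tasks: list[PMTask], grace_days: int = 0) -> float:
    """Percent of scheduled PMs completed by the due date plus a grace period."""
    if not tasks:
        raise ValueError("no scheduled PM tasks in the period")
    on_time = sum(
        1
        for t in tasks
        if t.completed is not None and (t.completed - t.due).days <= grace_days
    )
    return 100.0 * on_time / len(tasks)


tasks = [
    PMTask("PUMP-001", date(2024, 3, 1), date(2024, 3, 1)),    # on time
    PMTask("PUMP-001", date(2024, 4, 1), date(2024, 4, 9)),    # 8 days late
    PMTask("FAN-007", date(2024, 3, 15), date(2024, 3, 14)),   # early
    PMTask("FAN-007", date(2024, 4, 15)),                      # missed
]

strict = pm_compliance(tasks)
lenient = pm_compliance(tasks, grace_days=10)
print(f"strict: {strict:.0f}%, with 10-day grace: {lenient:.0f}%")
print("AI-ready" if strict >= AI_READY_TARGET else "below AI-ready target")
```

Whichever tolerance you choose, apply it consistently; a compliance figure is only comparable across sites and months if the on-time rule does not change.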

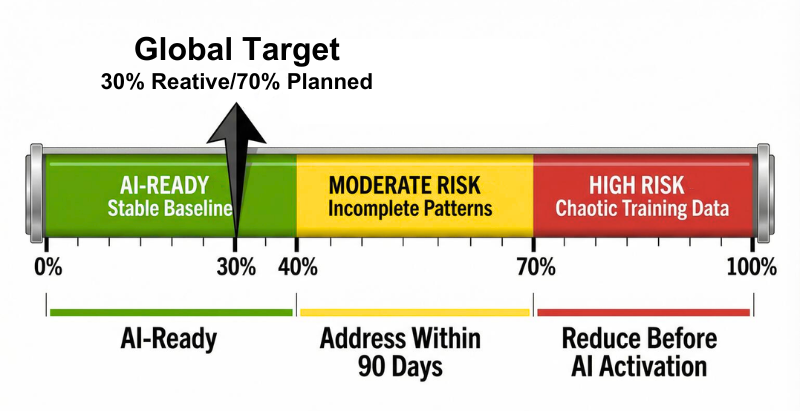

4) Reduce Reactive Maintenance Culture

A crisis-driven environment generates chaotic data. When the majority of work orders are emergency responses, predictive models trained on that history learn to expect chaos rather than detect patterns within it.

Fixing maintenance workflows before AI adoption means stabilizing planning and scheduling practices so that AI has a representative baseline to compare against. The reactive-to-planned ratio is the single most revealing metric for maintenance program health and is essential for assessing AI readiness in maintenance workflows, yet only 28% of facilities currently track it.
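Tracking the reactive-to-planned ratio takes very little tooling. The Python sketch below shows one way to compute it from a list of work order types; the type labels in `REACTIVE` and `PLANNED` are hypothetical and should be replaced with the categories your own system uses.

```python
from collections import Counter

# Hypothetical work order type labels; substitute your own categories.
REACTIVE = {"emergency", "breakdown"}
PLANNED = {"preventive", "predictive", "inspection"}


def reactive_planned_summary(order_types: list[str]) -> dict[str, float]:
    """Summarize reactive vs. planned work for a list of work order types."""
    counts = Counter(t.strip().lower() for t in order_types)
    reactive = sum(counts[t] for t in REACTIVE)
    planned = sum(counts[t] for t in PLANNED)
    classified = reactive + planned
    if classified == 0:
        raise ValueError("no work orders matched a reactive or planned type")
    return {
        "reactive_pct": 100.0 * reactive / classified,
        "planned_pct": 100.0 * planned / classified,
        # The ratio is reported as infinite when there is no planned work at all.
        "reactive_to_planned": reactive / planned if planned else float("inf"),
    }


history = ["Emergency"] * 6 + ["Preventive"] * 3 + ["Inspection"] * 1
summary = reactive_planned_summary(history)
print(summary)
```

Work orders whose type matches neither set are left out of the percentages, so a large unclassified share is itself a data quality signal worth reviewing.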

Reactive Culture Risk to AI

5) Eliminate Disconnected Maintenance Systems

When asset records live in one system, work orders in another, and inventory in a spreadsheet, AI doesn’t have a unified data stream to analyze. Disconnected maintenance systems produce fragmented histories, duplicate records, and data conflicts that undermine maintenance data integrity across every input.

AI implementation readiness in maintenance requires a centralized digital source of truth before predictive capabilities are layered on top. LLumin CMMS+ consolidates several of these capabilities into a single operational platform, eliminating the fragmentation that degrades predictive model quality.

6) Address Technician Workflow Consistency

Technician workflow consistency determines whether maintenance data across shifts and sites is comparable. If one technician codes a bearing failure as “mechanical,” another codes it as “vibration,” and a third leaves the field blank, the AI model sees three different events where there was one pattern. Inconsistent inputs produce unreliable outputs regardless of model sophistication.

Maintenance reporting accuracy requires standardized execution practices reinforced at the workflow level. These practices include mandatory fields, dropdown failure codes, and mobile-accessible work order templates that guide consistent documentation without adding administrative burden.
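One way to find out how inconsistent current coding is, before standardizing it, is to list every asset and component where more than one failure code has been used. The Python sketch below assumes hypothetical records of the form `(asset_id, component, failure_code)`; the function name and sample data are illustrative only.

```python
from collections import defaultdict


def inconsistent_coding(
    records: list[tuple[str, str, str]],
) -> dict[tuple[str, str], set[str]]:
    """Return (asset_id, component) pairs that were coded more than one way."""
    codes: dict[tuple[str, str], set[str]] = defaultdict(set)
    for asset_id, component, failure_code in records:
        # Normalize case and whitespace so "BELT_SLIP" and "belt_slip" match,
        # and make blank entries visible instead of silently dropping them.
        normalized = failure_code.strip().lower() or "<blank>"
        codes[(asset_id, component)].add(normalized)
    return {key: used for key, used in codes.items() if len(used) > 1}


# Hypothetical work order history echoing the bearing example above.
records = [
    ("PUMP-001", "bearing", "mechanical"),
    ("PUMP-001", "bearing", "Vibration"),
    ("PUMP-001", "bearing", ""),
    ("FAN-007", "belt", "belt_slip"),
    ("FAN-007", "belt", "BELT_SLIP"),
]

flagged = inconsistent_coding(records)
for (asset_id, component), used in flagged.items():
    print(asset_id, component, sorted(used))
```

Some flagged pairs will be genuinely different failures rather than inconsistent coding, so the output is a review list for a reliability engineer, not an automatic correction.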

How LLumin CMMS+ Strengthens AI Readiness

Often, the most practical way to fix your maintenance infrastructure before implementing AI is a centralized platform such as LLumin CMMS+. A fully integrated CMMS will:

- Build AI readiness in maintenance workflows as a byproduct of daily operations

- Enforce workflow documentation standards at the work order level

- Centralize asset hierarchy structure

- Connect condition monitoring and telematics into one platform

- Ensure that preparing maintenance data for AI happens continuously

Rather than layering predictive maintenance analytics onto fragmented processes, LLumin strengthens maintenance data integrity and enforces maintenance process standardization before AI. When AI capabilities activate, they build on structured, reliable inputs.

Explore LLumin’s AI in maintenance management e-book for a deeper look at building programs that are genuinely AI-ready.

Fix the Foundation, Then Add Intelligence with LLumin CMMS+

Knowing what to do before implementing AI maintenance is about strengthening existing processes so predictive capabilities become actionable rather than noisy. When maintenance workflows are standardized, documented, and consistent, AI delivers the 73% failure reduction and 30-50% downtime improvement that the research supports.

Book your free demo to see how LLumin CMMS+ helps fix the foundation before adding AI to your maintenance workflows. We also encourage you to use our free CMMS ROI calculator and our MTTR ROI calculator to quantify what that foundation is worth for your operation.

Frequently Asked Questions

Is my maintenance team ready for AI?

Most aren’t, at least not yet. Only 12% of organizations have data of sufficient quality and accessibility for AI, and 93% of companies consider their maintenance processes not very efficient. The honest answer requires auditing six specific areas, including work order documentation completeness, asset hierarchy consistency, PM compliance rate, reactive-to-planned ratio, system consolidation, and technician workflow standardization.

What do I need to do before using AI in maintenance?

What to do before implementing AI maintenance comes down to six fixes. Clean up incomplete work order records by enforcing mandatory failure codes and close-out documentation, standardize your asset hierarchy with consistent naming and parent-child structure, raise PM compliance to 90%+, reduce reactive work below 30% of total work orders, consolidate disconnected systems into a single CMMS, and standardize technician logging practices across all shifts and sites.

Does AI make maintenance workflows worse?

It can, if deployed before foundational problems are resolved. AI amplifies whatever inputs it receives, and poor data produces poor predictions. High false positive rates will erode technician trust, and alert fatigue from uncalibrated thresholds will turn predictive tools into noise generators. An estimated 60-80% of CMMS implementations fail for the same underlying reasons AI fails: poor user engagement, unclear goals, and lack of process discipline.

Is my maintenance data good enough for AI?

Probably not without remediation. The benchmarks for AI-ready maintenance data integrity are specific: failure codes present on 90%+ of closed work orders, consistent asset naming across all records, PM history covering at least 6-12 months of regular execution, and work orders linked to specific assets rather than general equipment categories. If your data falls short on any of these, AI models will either fail to detect meaningful patterns or generate alerts calibrated to distorted baselines.
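These benchmarks lend themselves to a quick self-audit. The Python sketch below checks three of them against exported records. The dictionary keys (`failure_code`, `asset_id`), the function name, and the 182-day approximation of six months are assumptions made for illustration, and the sketch does not verify that PM execution was regular within that window.

```python
from datetime import date

MIN_CODE_COVERAGE = 0.90   # failure codes on 90%+ of closed work orders
MIN_HISTORY_DAYS = 182     # assumption: roughly six months of PM history


def readiness_audit(closed_orders: list[dict], pm_dates: list[date]) -> dict[str, bool]:
    """Check exported maintenance data against the benchmarks described above."""
    total = len(closed_orders)
    coded = sum(1 for wo in closed_orders if wo.get("failure_code"))
    linked = sum(1 for wo in closed_orders if wo.get("asset_id"))
    history_days = (max(pm_dates) - min(pm_dates)).days if pm_dates else 0
    return {
        "failure_codes_on_90pct": total > 0 and coded / total >= MIN_CODE_COVERAGE,
        "all_orders_linked_to_asset": total > 0 and linked == total,
        "pm_history_six_months": history_days >= MIN_HISTORY_DAYS,
    }


# Hypothetical export: ten closed work orders, one missing its failure code.
closed_orders = [
    {"asset_id": "PUMP-001", "failure_code": "BEARING_WEAR"} for _ in range(9)
] + [{"asset_id": "PUMP-001", "failure_code": ""}]
pm_dates = [date(2024, 1, 1), date(2024, 4, 1), date(2024, 8, 1)]

report = readiness_audit(closed_orders, pm_dates)
for check, passed in report.items():
    print(f"{check}: {'pass' if passed else 'fail'}")
```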

How do I prepare my maintenance team for AI?

Prepare teams by addressing adoption barriers before go-live, not after. Communicate explicitly that AI is decision support, not a replacement tool. Invest in role-specific training, and involve technicians in threshold calibration and pilot designs so they feel ownership rather than imposition. Choose a pilot with a clear, visible win, such as equipment with documented recurring failures where an avoided breakdown is immediately noticeable. Finally, track maintenance KPIs at the technician level from day one so improvements are visible to the people doing the work, not only to leadership.

Ed Garibian, founder and CEO of LLumin Inc., is an experienced executive and entrepreneur with demonstrated success building award-winning, growth-focused software companies. He has an impressive track record with enterprise software and entrepreneurship and is an innovator in machine maintenance, asset management, and IoT technologies.